November 23, 2016

For organizers, it’s fundamental: no decisions about us, without us. But the opposite is happening in the technology that local and movement organizing groups use today, and the challenges we are about to face will increase exponentially with the incoming Trump administration. Bias is rampant online. The way to fix it is to ensure that the people who control access to the internet and who write the apps, algorithms and software that govern ever bigger chunks of our lives share at least some of our values and vision for racial equity. Below are some ripped-from-the-headlines highlights to be aware of, and stories from PTP’s efforts to turn the tables on racial bias in the tech landscape while building representation of people of color actively engaged in social change work.

From Your Feed to Police HQ?

A solidarity rally in St. Paul, MN Sept. 13. Law enforcement have been using Geofeedia and geographic-based listening services like it to monitor activists clustered around a hot spot, such as Standing Rock Reservation in North Dakota. Many people “checked in” remotely to confuse such services recently. Photo by Fibonacci Blue via Flickr

If you checked in at Standing Rock, you already know law enforcement is systematically monitoring social media to learn what activists plan. Some limits are in place and more may be coming. In October the California ACLU revealed that while tech titans at Twitter and Facebook offered support for Black Lives Matter, their companies offered a feed of activists’ social media streams directly to law enforcement agencies around the country. Geofeedia, a Chicago-based company, sells location-based analytics. The ACLU, Center for Media Justice and Color of Change found that feeds from locations in Oakland and Baltimore were among the data it sold to its many law enforcement customers, without obtaining the proper warrants. After the groups wrote to Twitter and to Facebook and its subsidiary Instagram, they saw quick results:

Instagram cut off Geofeedia’s access to public user posts, and Facebook has cut its access to a topic-based feed of public user posts. Twitter has also taken some recent steps to rein in Geofeedia though it has not ended the data relationship.

For the record, Geofeedia points out that the same tools that make it ideal for surveillance can be of vital help to first responders in a catastrophe. But the “clear policies and guidelines to prevent the inappropriate use of our software” that the company says are in place are not readily available on its website. Would a more diverse group of employees have been more sensitive to activists or more supportive of their CEOs’ positions? More detailed coverage of what happened can be found in TechCrunch, Forbes, and the Daily Dot.

How algorithms learn racism

National nonprofit news platform ProPublica has been analyzing flaws in big data programs for months. Artificial intelligences are “learning” bias from the news they analyze, journalists found. Screenshot from ProPublica video “Machine Bias”

Nonprofit news platform ProPublica has been reporting on issues associated with big data for about a year. To say that they found some problems is an understatement. Actually, you can’t make this stuff up:

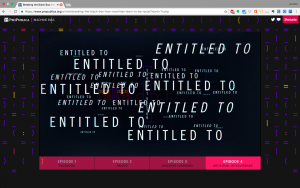

In one study researchers trained an AI program with 3 million words from Google News articles. This is what they found: the closest word returned for the query black male is ‘assaulted,’ while the response to white male is ‘entitled to.’

In other words, artificial intelligence is only as good as the patterns we teach it, as ProPublica reporter Julia Angwin says in a video component of a piece on “How Machines Learn To Be Racist.”

You can try this at home. Angwin and colleagues created a database of articles from six sets of news outlets. Enter a word, and an AI system they built will find synonyms based on each database. The difference between each set of news outlets is subtle but instructive.

Along these lines, last November at a Ford Foundation convening called Can computers be racist? Big data, inequality, and discrimination, Harvard professor Latanya Sweeney, director of the university’s Data Privacy Lab, shared similar research. She found repeated incidence of racial bias in her national study of 120,000 Internet search ads.

Her study looked at Google AdWords buys made by companies that provide criminal background checks. The results showed that when a search was performed on a name that was “racially associated” with the black community, the results were much more likely to be accompanied by an ad suggesting that the person had a criminal record, regardless of whether or not they did.

Would Sweeney’s research have come out differently if the IT sector workforce were less than three-quarters men and two-thirds white (Bureau of Labor Statistics’ Current Population Survey, 2015 employed persons, “computer and mathematical occupations”)? This topic is much on people’s minds. Sweeney is online here. Another good source is former “quant” trader Cathy O’Neil, who left Wall Street and joined Occupy Wall Street. She authors the blog mathbabe and just wrote Weapons of Math Destruction.

People help answer this problem

Kendra Moyer

To Kendra Moyer - who as one of several participants in PTP’s People of Color Techies program knows this problem from multiple angles - the problem is clear and the solution succinct:

We have to be the means of production even within the IT industry, because it’s one of the areas where white supremacy is being practiced.

Moyer was in the first class of People of Color Techies that PTP and May First/People Link hosted along with partners including the Praxis Project. She found her way to using tech in movement organizing by working with the Occupy Boston Information Technology team in 2012.

What she found frustrating, though, was a lack of viable employment for IT work at nonprofit and advocacy organizations, especially as a woman of color whose prime support was her own salary.

After several years in Boston, she still had not found a sustainable position in advocacy or nonprofits, so she returned home to Detroit. Now Moyer works at Henry Ford College part time, providing IT Support in the Communications Division. “You still push through your frustrations, but you have to find a way to afford to do it,” Moyer says.

Moyer is looking to be one of the people who helps bring about this solution. As a staff person at Henry Ford, she gets free tuition: “[A]nd I’m going to be taking IT classes,” she says. “If I don’t take advantage of that, I have no one to blame but myself.”

Tomás Aguilar

PTP’s own Tomás Aguilar, our trainer & PowerBase specialist, also came out of that first group of People of Color Techies. Previously an organizer, he used the program to gain technology experience that he could apply to his organizing.

Organizing for language justice, which led him to work on a Spanish-language Drupal website, was part of his original connection with PTP. When he learned about the POC Techies program, “I read the description and said, ‘I want to know this,’” he recalls. He was sponsored by the Praxis Project and connected with two mentors, one of whom was Jamie McClelland of May First/People Link and PTP.

Adding people of color to the corps of organizers and advocates doing tech-oriented work is urgent in order to add their viewpoints and experiences, he says. One of the things that most stuck with him from the process was something a mentor, Alfredo Lopez of May First, told him: the goal is not to show people how to make a form or learn PowerPoint but to teach them to run servers, troubleshoot, and set things up to support movements.

“I keep going back to the politics of servers,” Aguilar says. “Servers control everything that we see on the Internet or in our organization. Learning this level of technology can enable people of color to also be actors when running the Internet. It’s about the work, about running the infrastructure AND it’s about the tech. It’s how we’ll do this and it’s the whole point of the People of Color Techies program.”

This was researched and written pre-election. In some ways the election is changing everything, but we don’t think it changes anything about what we found or the importance of training up a growing body of People of Color Techies. Does your organization have any POC Techies currently, or are you one? Want to share your story, or interested in hosting a POC Techie Fellow? Let us know by email.